
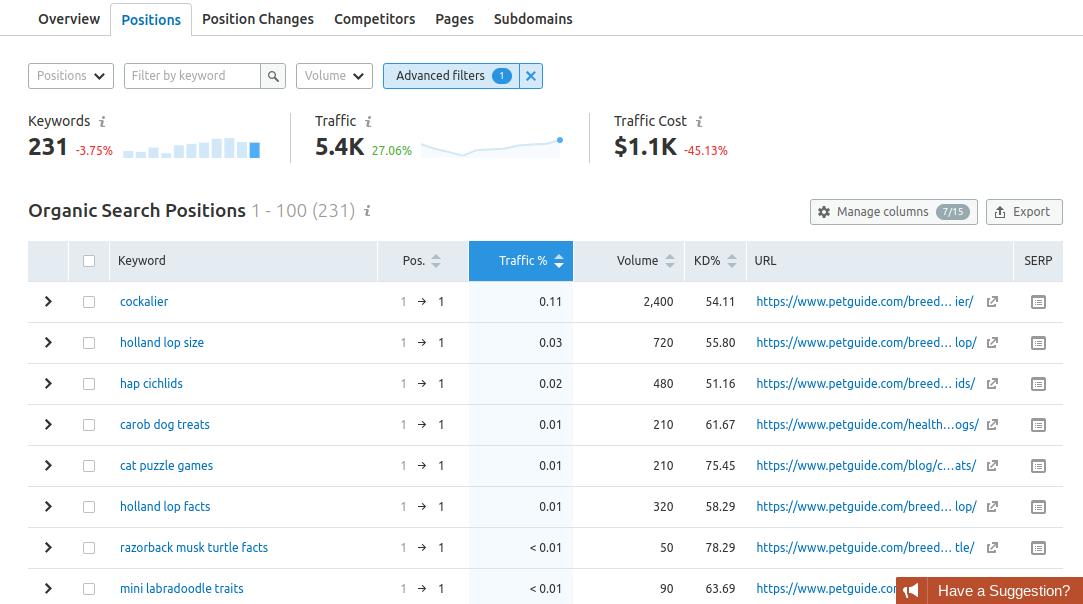

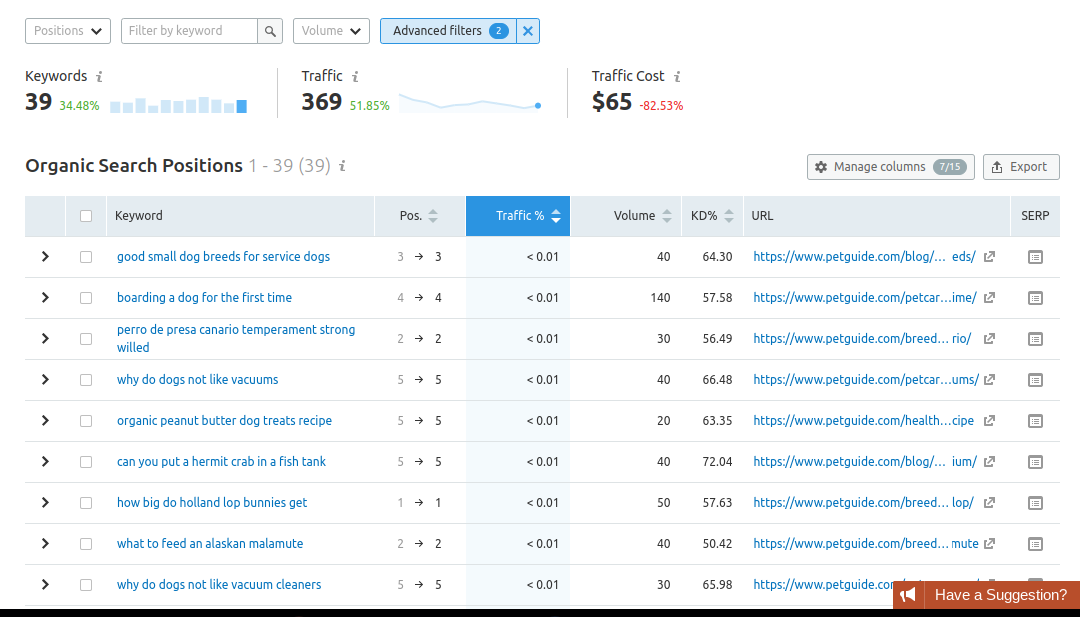

Now, after reading the title, you may think, “What new can I read here? I see similar articles on different blogs at least once a month.” I’m confident you’ll like this post, because it is based on original research. Every SEO specialist checks a site with the help of some SEO service. I work at one of the most popular all-in-one SEO platforms, Serpstat. Every year our team analyzes the site audit results of our users to find out which SEO errors are really the most common. In this article, I’ll shed light on the results we got for the last year.

Serpstat research: Results we’ve got

During 2018, our users carried out 204K audits and checked 223M pages through Serpstat. Our team analyzed this data and compiled the statistics. You can see all of them in the infographics below the text; here I just want to spell out some of the facts in words.

After the research, we discovered that most sites had problems with meta tags, markup, and links. The most common errors concern headlines, the HTTPS certificate, and redirects. Issues with hreflang, multimedia, content, indexing, HTTP status codes, AMP (accelerated mobile pages), and loading time were least likely.

We also analyzed country-specific domains to get more exact information. The statistics show that 70% of “.com” domains have their most common problems with links, loading time, and indexing. The situation is the same for “.uk” and “.ca” domains.

The most common mistakes and how to fix them

1. Meta tags

Meta tags are important even though they aren’t visible to website users. They tell search engines what the page is about and are used to build snippets. Meta tags affect your website ranking, and errors in them may spoil user signals. According to our research, you should first check the length of the title and of the description.

2. Links, markups, and headings

External links (their number and quality) affect your site’s position in the SERP, as search engines rate link profiles very carefully. You should also keep internal link factors in mind (nofollow attributes and URL optimization).

The Serpstat team also found that errors with markup and headings are quite common, even though both are very important for websites. Markup and headings contain attributes that mark and structure the data of the page. They also help search engines and social networks crawl and display the site correctly. The most common errors in this chapter are with:

3. HTTPS certificate

This certificate is one of the important ranking factors, as it ensures a secure connection between the website and the browser. If your website handles personal information, don’t forget to pay attention to it. The most common mistake here is an HTTPS website referring to an HTTP one.

4. Redirects, hreflang attribute, multimedia

Redirects send users from the requested URL to another one you need. According to our statistics, you should avoid the most common errors with them. If your site has a multilingual interface, it’s necessary to apply the hreflang attribute to the same content in different languages. That way search engines can understand which version of your texts users prefer.

Multimedia elements don’t affect SEO directly, but they can cause bad user signals and indexing errors. Pictures also affect the website’s loading time, which is why multimedia is rather important. The hreflang rule applies here too: if you have a multilingual interface, apply the attribute to the same content in multiple languages. You can find more information about the errors in this section in the infographics.

5. Indexing

Search engines find out what sites are about while indexing. If the site is closed for indexing, users can’t find it in the SERP. Some weak spots of the site that often lead to errors are the following:

6. HTTP status codes, AMP, and content

HTTP status codes are the responses the server returns to a user’s request. Errors with them are serious problems and negatively affect the position of the site in SERPs. AMP (accelerated mobile pages) are pages optimized for mobile devices, and you should use such technologies to improve the loading time of the site. Poor content also causes ranking positions to deteriorate. The most common problems here are:

7. Loading time

A long loading time can worsen the site’s usability and waste the crawl budget. The Serpstat team found that the most common problems here are associated with the use of browser cache and with image, JavaScript, and CSS optimization. You can view the detailed infographic here.

How to correct these errors

To find all the above-mentioned errors on your own site, you can start a custom project in the Serpstat Audit tool. There you can check the whole site or just a separate page. The module checks 20 pages per second and finds more than 50 errors that potentially harm your site. In its reports, Serpstat sorts errors by importance and category and lists the pages on which these problems were found. In addition, it offers recommendations on how to resolve a specific problem. Some of them are not errors in the true sense (“Information”); they are shown only so that you are aware of them.

Summary

There are a lot of errors that can damage your site and its rankings. Despite this, you can find them all at once with the help of audit tools. First, pay attention to the most common weaknesses:

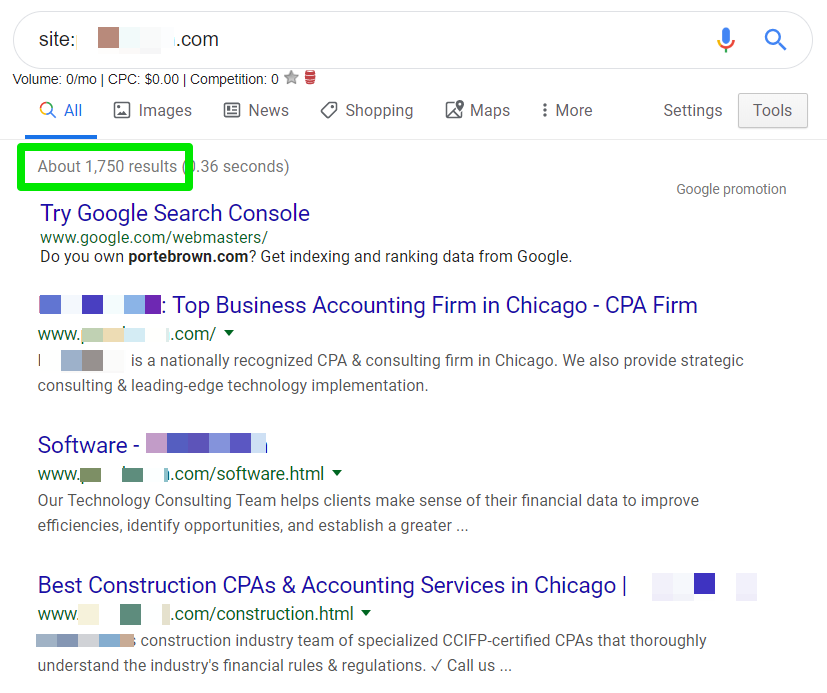

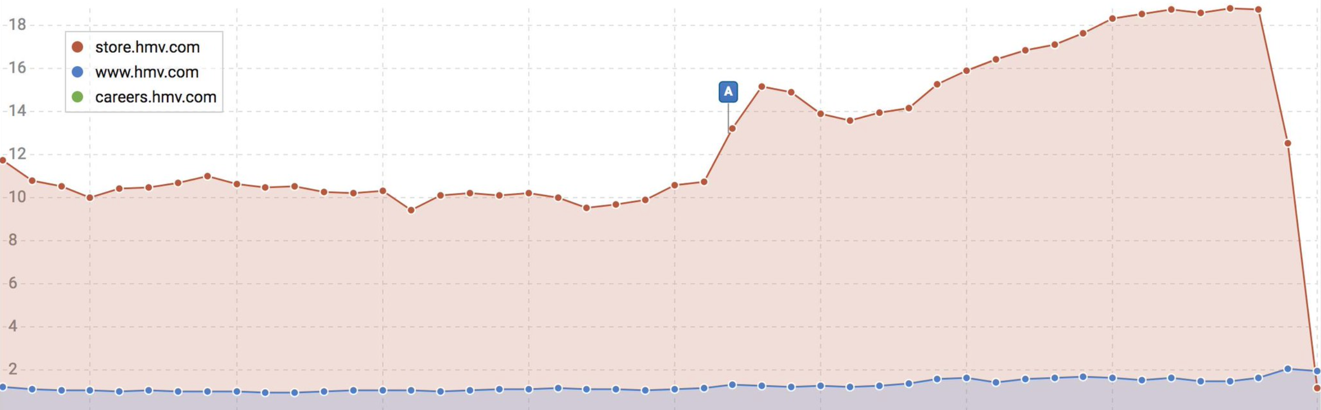

Inna Yatsyna is a Brand and Community Development Specialist at Serpstat. She can be found on Twitter @erin_yat.

The post Research: The most common SEO errors appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/07/17/the-most-common-seo-errors-research-infographics/

If you’re looking for a way to optimize your site for technical SEO and rank better, consider deleting your pages. I know, crazy, right? But hear me out.

We all know Google can be slow to index content, especially on new websites. But occasionally, it can aggressively index anything and everything it can get its robot hands on, whether you want it to or not. This can cause terrible headaches, hours of cleanup, and subsequent maintenance, especially on large sites and/or ecommerce sites.

Our job as search engine optimization experts is to make sure Google and other search engines can first find our content so that they can then understand it, index it, and rank it appropriately. When we have an excess of indexed pages, we are not being clear about how we want search engines to treat our pages. As a result, they take whatever action they deem best, which sometimes translates to indexing more pages than needed. Before you know it, you’re dealing with index bloat.

What is the index bloat?

Put simply, index bloat is when you have too many low-quality pages on your site indexed in search engines. Similar to bloating in the human digestive system (disclaimer: I’m not a doctor), the result of processing this excess content can be seen in search engines’ indices when their information retrieval process becomes less efficient.

Index bloat can even make your life difficult without you knowing it. In this puffy and uncomfortable situation, Google has to go through much more content than necessary (most of the time low-quality and internal duplicate content) before it can get to the pages you want it to index.

Think of it this way: Google visits your XML sitemap to find 5,000 pages, then crawls all your pages and finds even more of them via internal linking, and ultimately decides to index 30,000 URLs. That is 25,000 URLs more than you asked for, an indexation excess of approximately 500% or even more.

But don’t worry, diagnosing your indexation rate to measure against index bloat can be a very simple and straightforward check. You simply need to cross-reference the pages you want to get indexed against the ones that Google is indexing (more on this later). The objective is to find that disparity and take the most appropriate action. We have two options:

You will find that most of the time, fixing index bloat means removing a relatively large number of pages from the index by adding a “NOINDEX” meta tag. However, through this indexation analysis, it is also possible to find pages that were missed during the creation of your XML sitemap(s), and they can then be added to your sitemap(s) for better indexing.

Why index bloat is detrimental for SEO

Index bloat can slow processing time, consume more resources, and open up avenues outside of your control in which search engines can get stuck. One of the objectives of SEO is to remove the roadblocks that hinder great content from ranking in search engines, and these are very often technical in nature: for example, slow load speeds, noindex or nofollow meta tags where they don’t belong, missing internal linking strategies, and other such implementations.

Ideally, you would have a 100% indexation rate, meaning every quality page on your site would be indexed: no pollution, no unwanted material, no bloating. For the sake of this analysis, let’s consider anything above 100% bloat. Index bloat forces search engines to spend more of their limited resources than needed processing the pages they have in their database.

At best, index bloat causes inefficient crawling and indexing, hindering your ranking capability. At worst, it can lead to keyword cannibalization across many pages on your site, limiting your ability to rank in top positions and potentially hurting the user experience by sending searchers to low-quality pages.

To summarize, index bloat causes the following issues:

Sources of index bloat

1. Internal duplicate content

Unintentional duplicate content is one of the most common sources of index bloat. This is because most sources of internal duplicate content revolve around technical errors that generate large numbers of URL combinations that end up indexed, for example, using URL parameters to control the content on your site without proper canonicalization.

Faceted navigation has also been one of the “thorniest SEO challenges” for large ecommerce sites, as Portent describes, and has the potential to generate billions of duplicate content pages when a simple feature is overlooked.

2. Thin content

It’s important to mention an issue introduced by version 7.0 of the Yoast SEO plugin around attachment pages. This WordPress plugin bug led to “Panda-like problems” in March of 2018, causing heavy ranking drops for affected sites as Google deemed these sites to be lower in the overall quality they provided to searchers.

In summary, there is a setting within the Yoast plugin to remove attachment pages in WordPress. An attachment page is a page created for each image in your library with minimal content, the epitome of thin content for most sites. For some users, updating to the newest version (7.0 at the time) caused the plugin to overwrite the previous selection to remove these pages and default to indexing all attachment pages.

This meant that having five images per blog post would add five thin attachment URLs for every post, leaving only about 16% of the indexed URLs (one in six) with actual quality content and causing a massive drop in domain value.

3. Pagination

Pagination refers to the concept of splitting up content into a series of pages to make content more accessible and improve user experience. This means that if you have 30 blog posts on your site, you may have ten blog posts per page that go three pages deep. Like so:
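As a rough sketch of that split, the snippet below works out how many archive pages 30 posts need at ten per page. The `/blog/page/N/` URL pattern and the `paginate` helper are assumptions made for illustration; real sites use whatever pattern their CMS generates.

```python
import math


def paginate(post_count, per_page=10, base="/blog/"):
    """Return the archive URLs needed to list post_count posts.

    The /blog/page/N/ pattern is a hypothetical example, not a standard.
    """
    pages = math.ceil(post_count / per_page)
    urls = [base]  # the first page in the series
    # Pages 2..N are the ones that tend to repeat titles and descriptions.
    urls += [f"{base}page/{n}/" for n in range(2, pages + 1)]
    return urls


archive_urls = paginate(30)
print(archive_urls)
```

Every URL after the first one in that list is a candidate for the near-duplicate titles and descriptions discussed in this section.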

You’ll see this often on shopping pages, press releases, and news sites, among others.

Within the purview of SEO, the pages beyond the first in the series will very often contain the same page title and meta description, along with very similar (near duplicate) body content, introducing keyword cannibalization to the mix. Additionally, the purpose of these pages is a better browsing experience for users already on your site; it doesn’t make sense to send search engine visitors to the third page of your blog.

4. Under-performing content

If you have content on your site that is not generating traffic, has not resulted in any conversions, and does not have any backlinks, you may want to consider changing your strategy. Repurposing content is a great way to salvage any value from under-performing pages and create stronger and more authoritative pages.

Remember, as SEO experts our job is to help increase the overall quality and value that a domain provides, and improving content is one of the best ways to do so. For this, you will need a content audit to evaluate your own individual situation and what the best course of action would be.

Even a 404 page that returns a 200 (live) HTTP status code is a thin and low-quality page that should not be indexed.

Common index bloat issues

One of the first things I do when auditing a site is to pull up its XML sitemap. If the site runs on WordPress with a plugin like Yoast SEO or All in One SEO, you can very quickly find page types that do not need to be indexed. Check for the following:

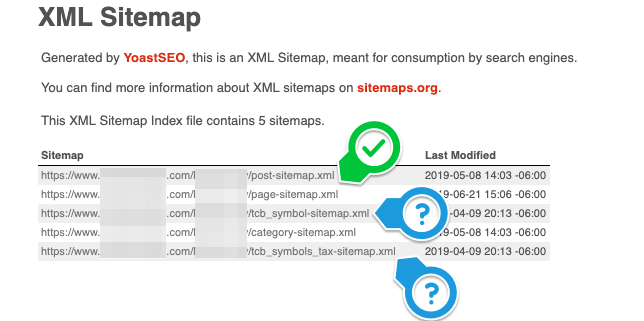

Whether the pages in your XML sitemap are low-quality and need to be removed from search really depends on the purpose they serve on your site. For instance, some sites do not use author pages in their blog but still have the author pages live, which is not necessary. “Thank you” pages should not be indexed at all, as they can cause conversion tracking anomalies. Test pages usually mean there’s a duplicate somewhere else. Similarly, some plugins or developers build custom features on web builds and create lots of pages that do not need to be indexed. For example, if you find an XML sitemap like the one below, it probably doesn’t need to be indexed:

Different methods to diagnose index bloat

Remember that our objective here is to find the greatest contributors of low-quality pages that are bloating the index. Most of the time it’s very easy to find these pages on a large scale, since a lot of thin content pages follow a pattern.

This is a quantitative analysis of your content, looking for volume discrepancies between the number of pages you have, the number of pages you are linking to, and the number of pages Google is indexing. Any disparity between these numbers means there’s room for technical optimization, which often results in an increase in organic rankings once solved. You want to make these sets of numbers as similar as possible.

As you go through the various methods to diagnose index bloat below, look out for patterns in URLs by reviewing the following:

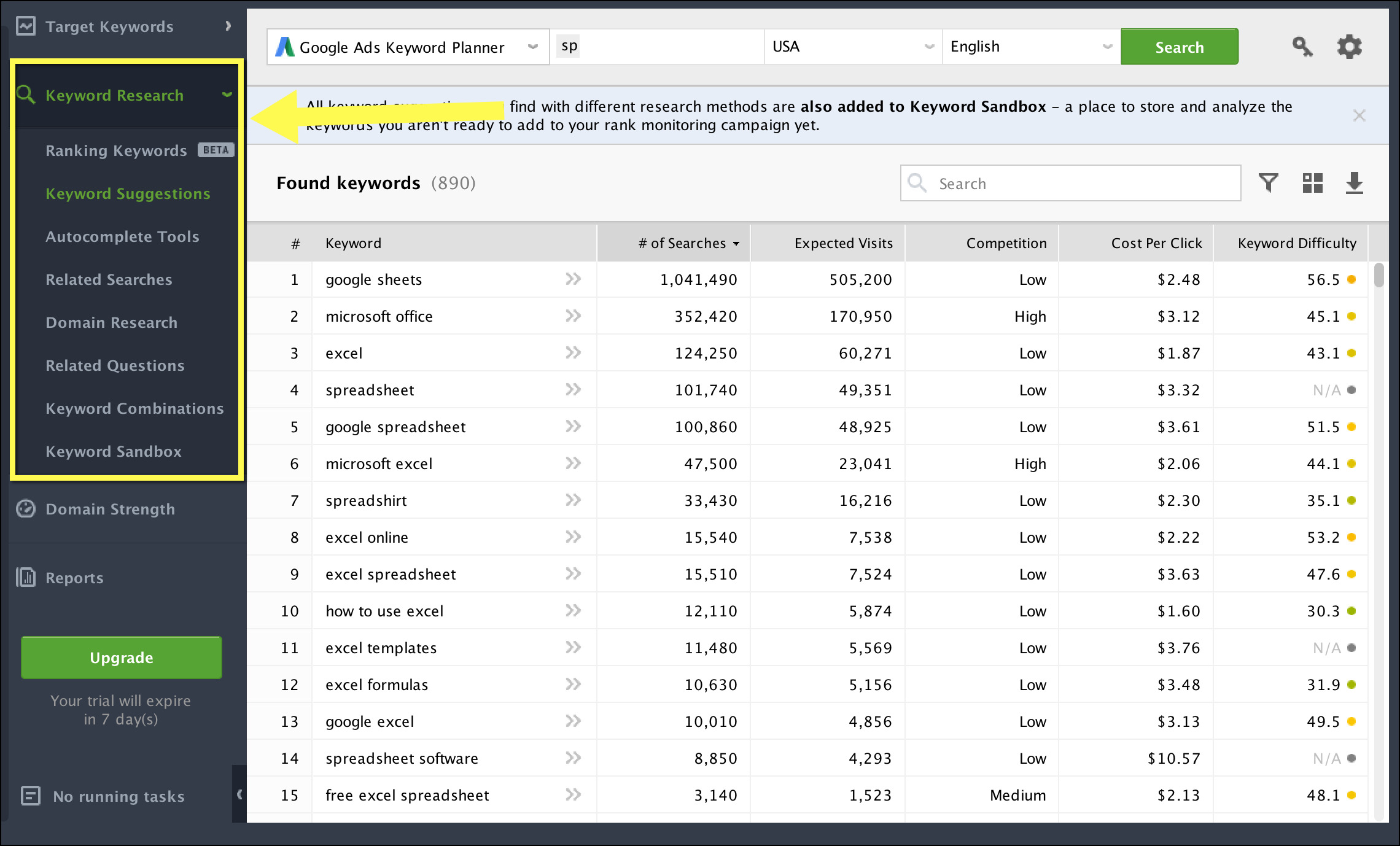
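One way to surface such patterns, sketched here under assumptions, is to count URLs by their first path segment and by the query parameter names they carry. The `url_patterns` helper and the example.com URLs are made up for illustration; in practice the list would come from a crawl export or your own logs.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def url_patterns(urls):
    """Count URLs per first path segment and per query parameter name."""
    segments = Counter()
    params = Counter()
    for url in urls:
        parts = urlsplit(url)
        path_bits = [p for p in parts.path.split("/") if p]
        segments[path_bits[0] if path_bits else "(root)"] += 1
        for name, _value in parse_qsl(parts.query, keep_blank_values=True):
            params[name] += 1
    return segments, params


# Hypothetical sample; replace with the URLs from your own crawl.
sample = [
    "https://example.com/",
    "https://example.com/blog/post-a/",
    "https://example.com/tag/shoes/",
    "https://example.com/tag/boots/",
    "https://example.com/shop/?color=red&size=9",
    "https://example.com/shop/?color=blue",
]
segments, params = url_patterns(sample)
print(segments.most_common())
print(params.most_common())
```

A folder or parameter that accounts for far more URLs than you expected is a good place to start the manual quality review.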

Next, I will walk you through a few simple steps you can take on your own using some of the most basic tools available for SEO. Here are the tools you will need:

As you start finding anomalies, add them to a spreadsheet so they can be manually reviewed for quality.

1. Screaming Frog crawl

Under Configuration > Spider > Basics, configure Screaming Frog to run a thorough scan of your site pages: check “crawl all subdomains” and “crawl outside of start folder”, and manually add your XML sitemap(s) if you have them. Once the crawl has been completed, take note of all the indexable pages it has listed. You can find this in the “Self-Referencing” report under the Canonicals tab.

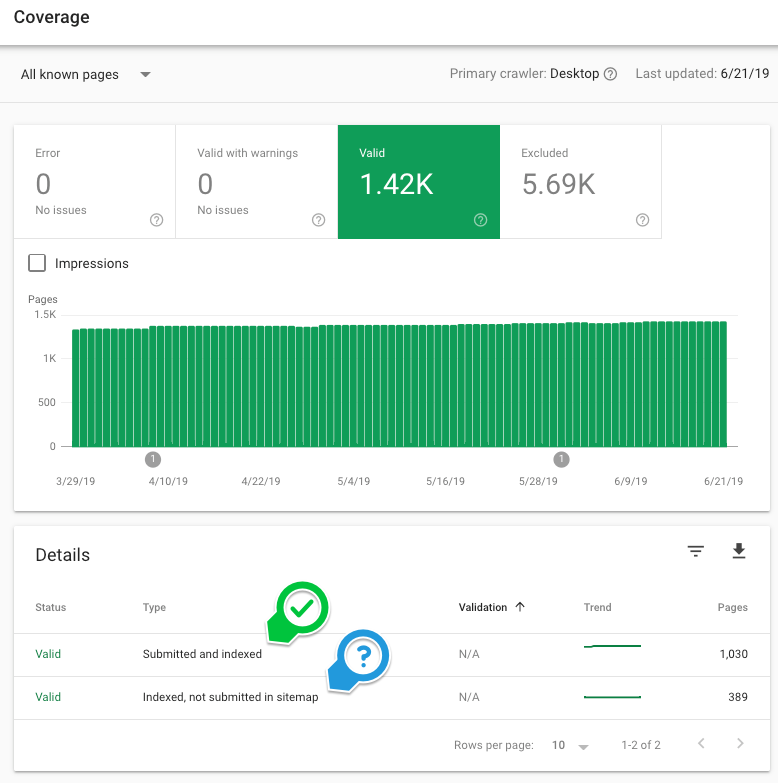

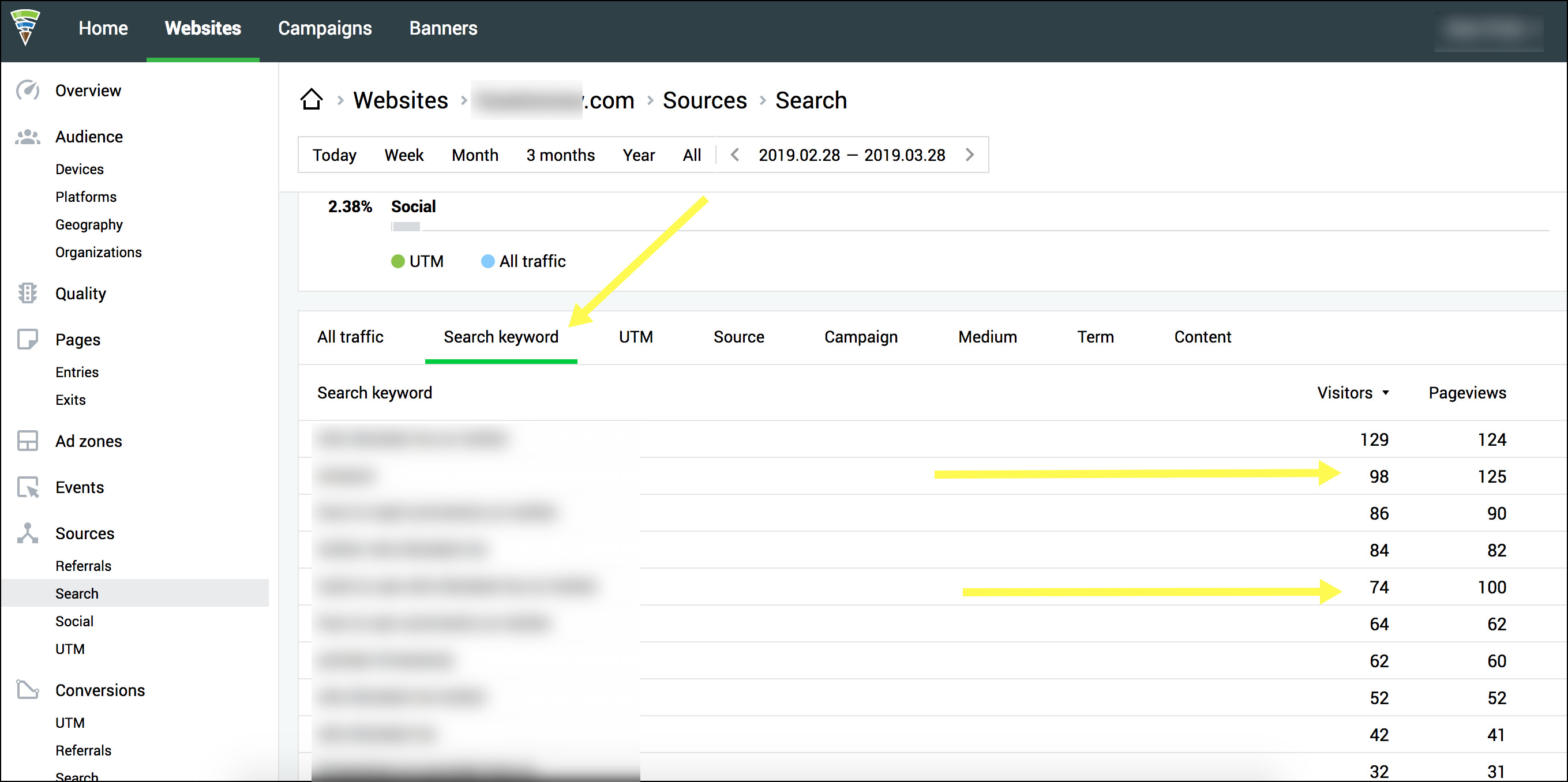

Take a look at the number you see. Are you surprised? Do you have more or fewer pages than you thought? Make a note of the number. We’ll come back to this.

2. Google’s Search Console

Open up your Google Search Console (GSC) property and go to the Index > Coverage report. Take a look at the valid pages. In this report, Google is telling you how many total URLs it has found on your site. Review the other reports as well; GSC can be a great tool to evaluate what Googlebot is finding when it visits your site.

How many pages does Google say it’s indexing? Make a note of the number.

3. Your XML sitemaps

This one is a simple check. Visit your XML sitemap and count the number of URLs included. Is the number off? Are there unnecessary pages? Are there not enough pages?

Conduct a crawl with Screaming Frog, add your XML sitemap to the configuration, and run a crawl analysis. Once it’s done, you can visit the Sitemaps tab to see which specific pages are included in your XML sitemap and which ones aren’t.
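If you prefer to run the counting and cross-referencing yourself, a minimal sketch could look like the one below. It assumes a standard sitemaps.org `urlset` file; the helper names, the sample sitemap, and the crawled URL set are hypothetical stand-ins for your own data, and the 30,000 and 5,000 figures are the ones from the example earlier in this post.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard sitemaps.org urlset files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the set of <loc> URLs found in a urlset sitemap."""
    root = ET.fromstring(xml_text)
    locs = root.findall("sm:url/sm:loc", SITEMAP_NS)
    return {loc.text.strip() for loc in locs if loc.text}


def compare(sitemap, crawled):
    """Show which URLs appear in only one of the two sets."""
    return {
        "in_sitemap_not_crawled": sorted(sitemap - crawled),
        "crawled_not_in_sitemap": sorted(crawled - sitemap),
    }


def indexation_rate(indexed_count, wanted_count):
    """Indexed URLs as a percentage of the URLs you want indexed."""
    return 100.0 * indexed_count / wanted_count


# Hypothetical data for illustration only.
sample_sitemap = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/post-a/</loc></url>
</urlset>"""
crawled = {"https://example.com/", "https://example.com/tag/shoes/"}

in_sitemap = sitemap_urls(sample_sitemap)
report = compare(in_sitemap, crawled)
print(len(in_sitemap), report)

# 30,000 indexed against 5,000 wanted is a 600% rate, i.e. 500% excess.
rate = indexation_rate(30000, 5000)
print(rate)
```

URLs that were crawled but are missing from the sitemap are candidates for either a noindex tag or an addition to the sitemap, depending on their quality.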
